AR technology makes depth perception possible for individuals with sight in only one eye.

New software built into augmented reality goggles allows individuals with sight in only one eye to experience depth perception by projecting additional imagery into the healthy eye.

This provides the wearer of the AR glasses with an enhanced perception of depth.

Being able to judge three-dimensional distances has, until now, been limited to individuals with two functioning eyes. Binocular vision helps people judge the distance between two objects. For example, it allows you to judge the distance from your fork to your plate, or from the front of your car to the truck ahead of you in traffic. When only one eye functions, that binocular depth perception is lost.

New augmented reality glasses have now been designed that can help to overcome this issue.

A team at Japan’s University of Yamanashi has now constructed exactly this type of device. They have produced augmented reality goggles that artificially create a sense of depth in the wearer’s healthy eye.

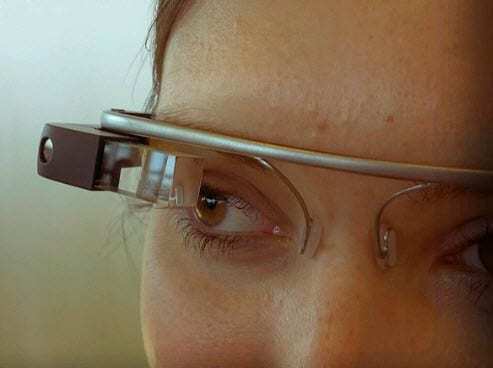

The researchers, led by Xiaoyang Mao, used a commercially available pair of 3D glasses, the Wrap 920AR produced by Vuzix Corporation. The same manufacturer is also building an augmented reality headset, the M100, which appears to offer considerable competition to Google Glass.

The Wrap 920AR augmented reality goggles look like a standard pair of sunglasses, except that a camera lens points outward from each side. The user can see through the transparent lenses, and the glasses both capture and, with computer assistance, project the images that the wearer should see.

The Yamanashi research team developed augmented reality software that uses both cameras on the glasses to capture what each eye would see. Those images are transmitted to a computer, where the software combines the perspectives from the two cameras to produce a “defocus” effect: some objects remain crisply focused while others are blurred, generating a perception of depth. The final image is projected into the wearer’s working eye.
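To give a rough sense of how a depth-dependent “defocus” effect can work, here is a minimal Python sketch. It is not the Yamanashi team's software, whose details are not described here. It assumes a per-pixel disparity map has already been computed from the two camera views (larger disparity meaning a closer object), and the function names `box_blur` and `defocus_by_depth`, the `focus_disparity` parameter, and the simple box filter are all illustrative choices.

```python
import numpy as np

def box_blur(image, radius):
    """Blur a 2-D grayscale image with a (2*radius+1)-wide box filter."""
    if radius == 0:
        return image.astype(float)
    size = 2 * radius + 1
    # Replicate edge pixels so the output keeps the input's shape.
    padded = np.pad(image.astype(float), radius, mode="edge")
    out = np.zeros(image.shape, dtype=float)
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + image.shape[0], dx:dx + image.shape[1]]
    return out / (size * size)

def defocus_by_depth(image, disparity, focus_disparity, max_radius=3):
    """Keep pixels near focus_disparity sharp and blur the rest.

    Assumption: `disparity` is a per-pixel stereo disparity map with the
    same shape as `image`. The blur radius grows with the distance
    between a pixel's disparity and the chosen focus disparity.
    """
    image = np.asarray(image, dtype=float)
    disparity = np.asarray(disparity, dtype=float)
    distance = np.abs(disparity - focus_disparity)
    span = distance.max()
    if span == 0:
        # Everything lies at the focus depth, so nothing is blurred.
        return image.copy()
    radius_map = np.rint(distance / span * max_radius).astype(int)
    result = np.empty_like(image)
    for radius in range(max_radius + 1):
        mask = radius_map == radius
        if mask.any():
            result[mask] = box_blur(image, radius)[mask]
    return result

# Usage: a checkerboard whose left half is "near" (in focus) and whose
# right half is "far" (defocused).
img = np.indices((8, 8)).sum(axis=0) % 2
disp = np.full((8, 8), 2.0)
disp[:, :4] = 10.0
out = defocus_by_depth(img, disp, focus_disparity=10.0)
```

In this toy example the left half of `out` matches the input exactly, while the right half is smoothed. A real system would also need to compute the disparity map from the two camera images and would likely use a more realistic blur than a box filter.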